Introducing Hyperscalers SQL Agent: A Secure, Air-Gapped, and Locally Deployed AI-Powered Database Interface

Breaking the SQL Barrier with Natural Language

For years, working with databases has required a solid understanding of SQL syntax, making data access a challenge for non-technical users. What if querying a database were as easy as asking a question? Hyperscalers SQL Agent addresses this by using large language models (LLMs) to transform natural language questions into SQL statements, so users no longer need to craft complex database queries by hand.

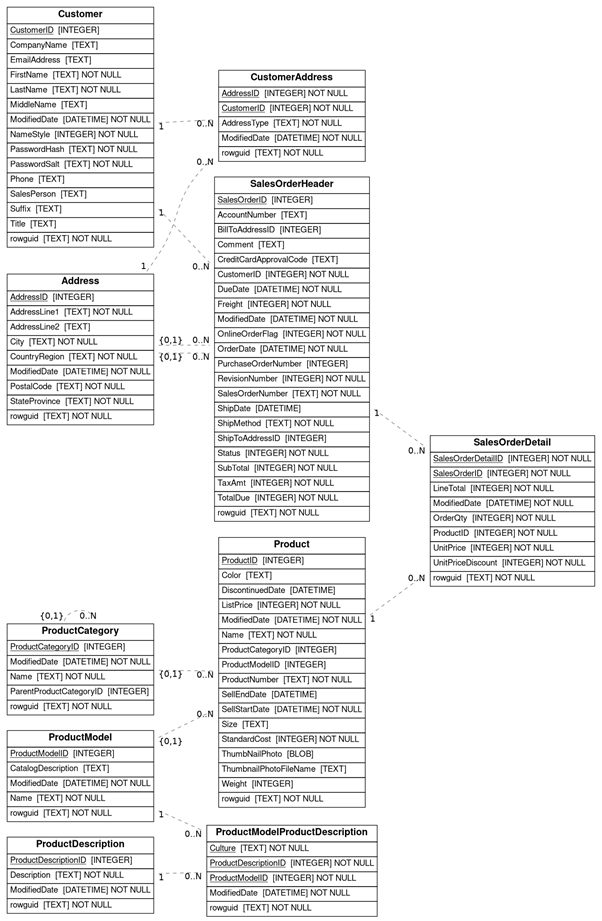

In this demonstration, we use AdventureWorks-SQLite, a lightweight version of the well-known AdventureWorks database, adapted for SQLite. This database represents a simplified business scenario with tables covering customers, orders, products, and employees. Below is an overview of its key schema:

- Customer: Stores customer information such as name, contact details, and company affiliation.

- SalesOrderHeader & SalesOrderDetail: Tracks orders, their statuses, and itemized details.

- Product: Contains product listings with pricing and categorization.

- Address: Holds address records linked to customers and orders.

- ProductCategory & ProductModel: Organizes products into categories and models.

By integrating this structured database with Hyperscalers SQL Agent, we demonstrate how natural language processing (NLP) can revolutionize database interactions.
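To make the schema overview concrete, here is a minimal sketch that builds a toy version of a few of these tables in an in-memory SQLite database and lists them. The column names are assumptions chosen for illustration; the real AdventureWorks-SQLite tables carry many more fields and may name them differently.

```python
import sqlite3

# Hypothetical, heavily simplified columns. The real AdventureWorks-SQLite
# tables have many more fields and may use different names.
TOY_SCHEMA = """
CREATE TABLE Customer (
    CustomerID INTEGER PRIMARY KEY,
    Name TEXT,
    CompanyName TEXT
);
CREATE TABLE ProductCategory (
    ProductCategoryID INTEGER PRIMARY KEY,
    Name TEXT
);
CREATE TABLE Product (
    ProductID INTEGER PRIMARY KEY,
    Name TEXT,
    ListPrice REAL,
    ProductCategoryID INTEGER REFERENCES ProductCategory(ProductCategoryID)
);
CREATE TABLE SalesOrderHeader (
    SalesOrderID INTEGER PRIMARY KEY,
    CustomerID INTEGER REFERENCES Customer(CustomerID),
    Status INTEGER
);
CREATE TABLE SalesOrderDetail (
    SalesOrderDetailID INTEGER PRIMARY KEY,
    SalesOrderID INTEGER REFERENCES SalesOrderHeader(SalesOrderID),
    ProductID INTEGER REFERENCES Product(ProductID),
    OrderQty INTEGER
);
"""


def list_tables(conn):
    """Return the names of all user tables, sorted alphabetically."""
    rows = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    return [name for (name,) in rows]


def describe_table(conn, table):
    """Return (column_name, declared_type) pairs for one table."""
    # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk).
    rows = conn.execute(f"PRAGMA table_info({table})").fetchall()
    return [(row[1], row[2]) for row in rows]


conn = sqlite3.connect(":memory:")
conn.executescript(TOY_SCHEMA)
tables = list_tables(conn)
customer_columns = describe_table(conn, "Customer")
```

Table and column listings like these are the kind of structural information a text-to-SQL tool needs in order to write valid queries, although this post does not describe how Hyperscalers SQL Agent obtains it.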

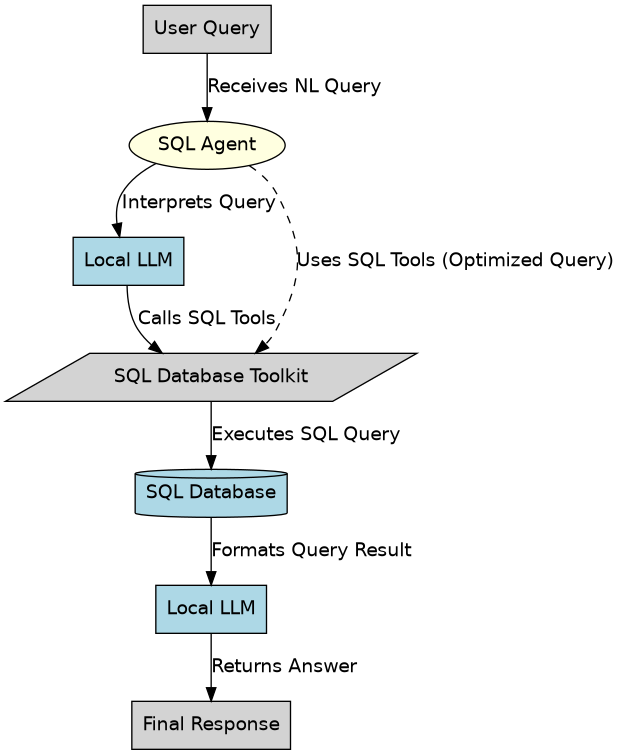

How It Works

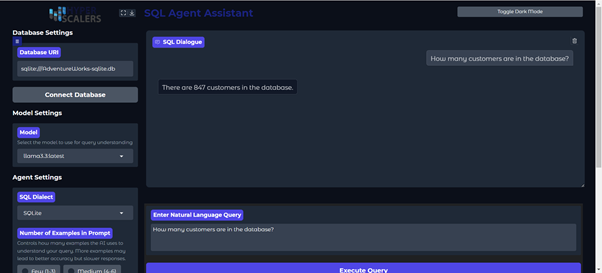

The Hyperscalers SQL Agent integrates an LLM-powered SQL generation engine with an intuitive web UI. Users enter natural language queries, and the system translates them into optimized SQL commands, retrieves the relevant data, and presents the results in a structured format.

For example, when a user asks, "How many customers are in the database?", the system generates and executes a structured SQL query to retrieve the answer.
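As an illustration, a question like this would typically map to a single aggregate query. The sketch below runs one plausible form of it against a three-row toy `Customer` table; the exact SQL the agent generates may differ, and the table contents here are invented for the example.

```python
import sqlite3

# Toy stand-in for the Customer table (invented rows, simplified columns).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Customer (CustomerID INTEGER PRIMARY KEY, Name TEXT)")
conn.executemany(
    "INSERT INTO Customer (Name) VALUES (?)",
    [("Ana",), ("Ben",), ("Chloe",)],
)

# One plausible translation of "How many customers are in the database?"
generated_sql = "SELECT COUNT(*) AS customer_count FROM Customer;"

(customer_count,) = conn.execute(generated_sql).fetchone()
print(f"There are {customer_count} customers in the database.")
```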

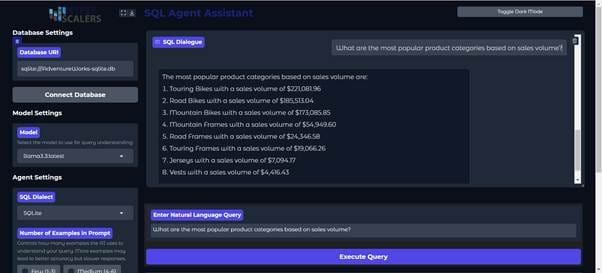

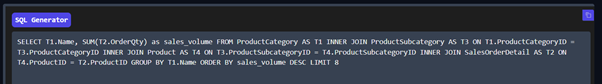

Similarly, when asked, "What are the most popular product categories based on sales volume?" the system processes the request and generates the appropriate SQL query. The response is displayed in an intuitive interface, showing the extracted information.

The process follows these steps:

- The user inputs a natural language query, such as "What are the most popular product categories based on sales volume?"

- The LLM interprets the request and generates the corresponding SQL query.

- The query is executed on the database to retrieve results.

- The LLM formats the results, which are displayed in human-readable form.
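The steps above can be sketched as a small pipeline. This is a minimal illustration, not the product's implementation: `fake_generate_sql` is a hypothetical stand-in for the LLM call that returns a canned query, `plain_text_answer` stands in for the LLM's formatting step, and the toy tables and rows are assumptions.

```python
import sqlite3

# Canned SQL that a model might plausibly produce for the example question.
CANNED_SQL = """
SELECT pc.Name AS category, SUM(sod.OrderQty) AS units_sold
FROM SalesOrderDetail AS sod
JOIN Product AS p ON p.ProductID = sod.ProductID
JOIN ProductCategory AS pc ON pc.ProductCategoryID = p.ProductCategoryID
GROUP BY pc.Name
ORDER BY units_sold DESC
"""


def fake_generate_sql(question):
    """Hypothetical stand-in for the LLM: ignores the question, returns canned SQL."""
    return CANNED_SQL


def plain_text_answer(columns, rows):
    """Hypothetical stand-in for the formatting step."""
    return "; ".join(f"{name}: {total}" for name, total in rows)


def answer_question(conn, question, generate_sql, format_answer):
    sql = generate_sql(question)                       # step 2: question -> SQL
    cursor = conn.execute(sql)                         # step 3: run the query
    columns = [col[0] for col in cursor.description]
    rows = cursor.fetchall()
    answer = format_answer(columns, rows)              # step 4: readable output
    return {"sql": sql, "columns": columns, "rows": rows, "answer": answer}


# Toy data with invented rows and simplified columns.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE ProductCategory (ProductCategoryID INTEGER PRIMARY KEY, Name TEXT);
CREATE TABLE Product (ProductID INTEGER PRIMARY KEY, Name TEXT, ProductCategoryID INTEGER);
CREATE TABLE SalesOrderDetail (ProductID INTEGER, OrderQty INTEGER);
INSERT INTO ProductCategory VALUES (1, 'Bikes'), (2, 'Helmets');
INSERT INTO Product VALUES (1, 'Road Bike', 1), (2, 'Mountain Bike', 1), (3, 'Sport Helmet', 2);
INSERT INTO SalesOrderDetail VALUES (1, 2), (2, 1), (3, 5);
""")

result = answer_question(
    conn,
    "What are the most popular product categories based on sales volume?",  # step 1
    fake_generate_sql,
    plain_text_answer,
)
print(result["answer"])
```

Passing the SQL generator and the formatter in as functions keeps the database step independent of whichever model is used, and returning the SQL alongside the rows lets a UI show the query next to the answer.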

Key Features

1. Conversational Database Interaction

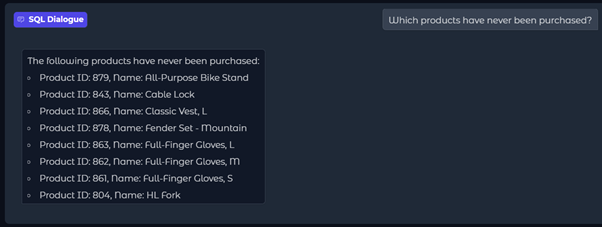

Instead of writing SQL, users can ask questions in plain language. For example:

- "Which products have never been purchased?"

- "Export the top 5 customer contact information to a CSV file."

The AI understands the intent and generates the necessary SQL query.
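For instance, "Which products have never been purchased?" usually translates to an anti-join, and a CSV export is a thin layer over the query results. The sketch below shows one plausible form of each; the table layout and rows are invented, `rows_to_csv` is a hypothetical helper, and the agent's actual SQL and export mechanism may differ.

```python
import csv
import io
import sqlite3

# Toy data with invented rows and simplified columns.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Product (ProductID INTEGER PRIMARY KEY, Name TEXT);
CREATE TABLE SalesOrderDetail (SalesOrderID INTEGER, ProductID INTEGER, OrderQty INTEGER);
INSERT INTO Product VALUES (1, 'Road Bike'), (2, 'Sport Helmet'), (3, 'Water Bottle');
INSERT INTO SalesOrderDetail VALUES (100, 1, 2), (101, 3, 10);
""")

# Anti-join: keep products with no matching order line.
never_purchased_sql = """
SELECT p.Name
FROM Product AS p
LEFT JOIN SalesOrderDetail AS sod ON sod.ProductID = p.ProductID
WHERE sod.ProductID IS NULL
ORDER BY p.Name
"""


def rows_to_csv(columns, rows):
    """Serialize a result set to CSV text (hypothetical helper)."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(columns)
    writer.writerows(rows)
    return buffer.getvalue()


cursor = conn.execute(never_purchased_sql)
columns = [col[0] for col in cursor.description]
unsold = cursor.fetchall()
csv_text = rows_to_csv(columns, unsold)
```

To write an actual file instead of a string, the same `csv.writer` calls work on a handle opened with `open(path, "w", newline="")`.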

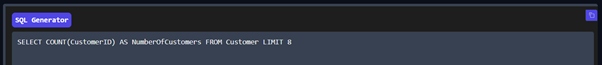

2. Real-Time SQL Generation and Execution

Every query is instantly converted into SQL behind the scenes. Users can view, copy, and refine the generated SQL, allowing both beginners and advanced users to work with full transparency and control.

Copy AI-Generated Queries

In the SQL Generator, users can directly copy the AI-generated query by clicking the copy button. This allows developers and analysts to reuse, modify, or refine queries in external SQL tools or scripts.

3. Intuitive Web UI

The Hyperscalers SQL Agent web UI provides:

- Easy database connectivity

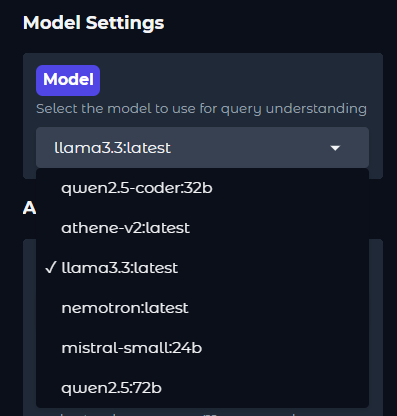

- Model selection for better query understanding

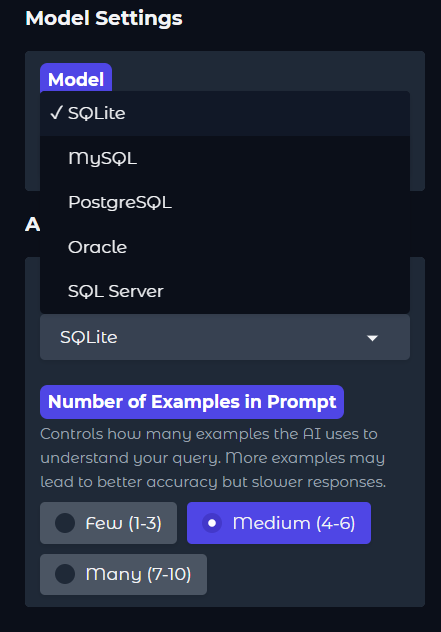

- SQL dialect selection (SQLite, MySQL, PostgreSQL, etc.)

- Configurable AI prompt settings

Users can connect to a database, select a model, and start querying in just a few clicks.

4. Full Control Over Data Privacy

Unlike cloud-based AI solutions that send data to remote servers, Hyperscalers SQL Agent runs entirely on local infrastructure. This ensures that sensitive data remains within the organization, making it a viable option for industries with strict compliance requirements, such as finance, healthcare, and government sectors.

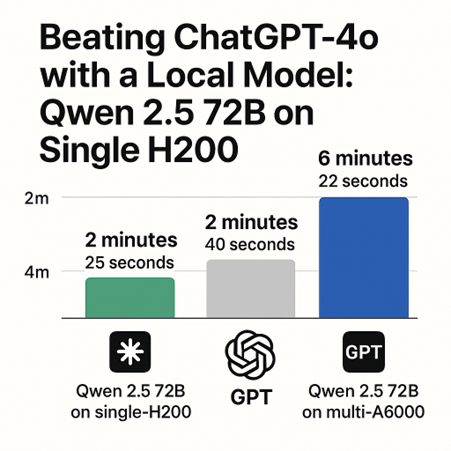

Beyond ensuring data privacy, running a local large language model provides greater control and customization. Organizations can fine-tune the model to better understand industry-specific terminology and queries, improving its accuracy for specialized applications. Additionally, local deployment avoids round trips to remote servers, which can shorten response times, especially in high-concurrency and real-time use cases. As hardware costs decrease, businesses can use local compute resources to build cost-effective and scalable AI solutions.

Hyperscalers is developing a proprietary SQL LLM optimized for enterprise use, offering enhanced query understanding, adaptability across databases, and superior SQL generation to enable seamless, intelligent, and secure database management.

5. Multi-Database Support

The system supports multiple SQL dialects, allowing users to connect to:

- SQLite

- MySQL

- PostgreSQL

- Oracle

- SQL Server

Users can switch between database connections seamlessly without changing their workflow.
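Dialect selection matters because the same request can need different SQL on different engines. Row limiting is a common case: SQLite, MySQL, and PostgreSQL accept `LIMIT`, SQL Server uses `TOP`, and Oracle (12c and later) uses `FETCH FIRST`. The sketch below is only an illustration of that difference; `top_n_query` is a hypothetical helper, it does not quote identifiers, and this post does not describe how the agent handles dialects internally.

```python
def top_n_query(dialect, table, order_column, n):
    """Build a 'top n rows' query for the given SQL dialect.

    Hypothetical helper for illustration only: table and column names are
    inserted as-is, so they must come from a trusted source.
    """
    if not isinstance(n, int) or n < 1:
        raise ValueError("n must be a positive integer")

    dialect = dialect.lower()
    base = f"SELECT * FROM {table} ORDER BY {order_column} DESC"

    if dialect in ("sqlite", "mysql", "postgresql"):
        return f"{base} LIMIT {n}"
    if dialect == "sqlserver":
        # SQL Server places the row limit right after SELECT.
        return f"SELECT TOP ({n}) * FROM {table} ORDER BY {order_column} DESC"
    if dialect == "oracle":
        # Row-limiting clause available from Oracle 12c onward.
        return f"{base} FETCH FIRST {n} ROWS ONLY"
    raise ValueError(f"unsupported dialect: {dialect}")


for name in ("sqlite", "mysql", "postgresql", "sqlserver", "oracle"):
    print(f"{name:<11} {top_n_query(name, 'Customer', 'CustomerID', 5)}")
```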

Why It Matters

- No More SQL Learning Curves – Users, regardless of technical background, can retrieve data effortlessly.

- Enhanced Productivity – Business teams can access data without waiting for IT or database administrators.

- Fewer Query Errors – AI-generated SQL reduces hand-written syntax mistakes, and users can review the generated SQL before relying on it.

- Faster Decision-Making – Instant access to insights leads to more efficient business strategies.

The Future of Database Interactions

With Hyperscalers SQL Agent, database access becomes more intuitive and efficient. The integration of LLMs with SQL simplifies data interactions and accelerates decision-making without compromising security.

What are your thoughts on AI-powered SQL querying? Have you used similar tools before? Share your experiences in the comments below.