Why Run Inference and Train LLMs and LMMs within Your Private Network?

• As people move on to other organisations, they take their knowledge and IP with them. Running inference and training generative AI (Large Language Models and Large Multimodal Models) inside your network provides a means to retain that knowledge base and IP so that it stays with the organisation.

• Gaining the ability to train your own AI models means IT can generate value for the business with every process and workflow that is streamlined, fully automated and/or quality assured. Ultimately, this increases the value of the business, as it can do more with less.

• Compliance: Ensure compliance with data sovereignty, protection and privacy regulations by not sending enterprise data and IP to external proprietary LLMs outside your network. External AI models may be trained on your users' interactions with them, at which point your organisation's IP and knowledge has left your control.

• Security: Safeguard your infrastructure, data and applications with state-of-the-art security, including your own AI model that can perform Cyber Assurance from the added safety of your network.

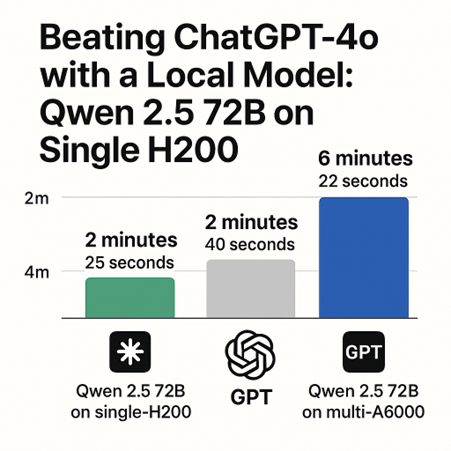

• Cost-Effectiveness: Benefit from cost-effective solutions without compromising on quality or security.

• Latency and bandwidth: The explosion of data has made transferring very large data sets over the internet time consuming and costly. GPUs that sit idle while waiting for data are poorly utilised and waste energy.
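
To put the latency and bandwidth point in rough numbers, the sketch below estimates how long it takes to move a data set over a network link. The function name `transfer_time_hours`, the 100 TB data set size, the two link speeds and the 80% link efficiency are all illustrative assumptions, not measurements from any particular deployment.

```python
def transfer_time_hours(dataset_terabytes: float, link_gbps: float,
                        efficiency: float = 0.8) -> float:
    """Estimate the hours needed to move a data set over a network link.

    efficiency is an assumed fraction of the nominal link speed that is
    actually achieved after protocol overhead and contention.
    """
    if dataset_terabytes < 0 or link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("size must be >= 0, speed > 0, efficiency in (0, 1]")
    dataset_bits = dataset_terabytes * 1e12 * 8      # decimal terabytes to bits
    effective_bps = link_gbps * 1e9 * efficiency     # usable bits per second
    return dataset_bits / effective_bps / 3600       # seconds to hours


if __name__ == "__main__":
    # Hypothetical comparison: an internet uplink versus a local network link.
    links = [("1 Gbps internet uplink", 1.0), ("100 Gbps local network", 100.0)]
    for label, gbps in links:
        print(f"{label}: {transfer_time_hours(100, gbps):.1f} hours")
```

Under these assumptions, a 100 TB data set needs roughly 278 hours (more than 11 days) over the 1 Gbps link and under 3 hours over the 100 Gbps link. Real figures depend on the actual link, the storage systems at each end and the data set itself.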